“For decades, we’ve relied on the ‘bit’, a simple 1 or 0, to build our modern world. But as our problems become more complex, from climate modeling to global logistics, our most powerful silicon chips are reaching their physical limits. Quantum Computing isn’t just a faster version of what we have; it’s a fundamental rethink of information itself. By harnessing the power of atoms and subatomic particles, we are entering an era of ‘Exponential Intelligence.’ This post breaks down the core pillars of qubits, superposition, and entanglement to explain why the quantum race is the most important technological competition of the 21st century.”

Quantum computing uses the principles of quantum mechanics (superposition, entanglement, and interference) to perform certain specific calculations far faster than classical computers, in some cases exponentially so. Instead of processing binary bits (0 or 1), as a classical computer does, quantum computers use qubits that can represent 0, 1, or a combination of both at once.

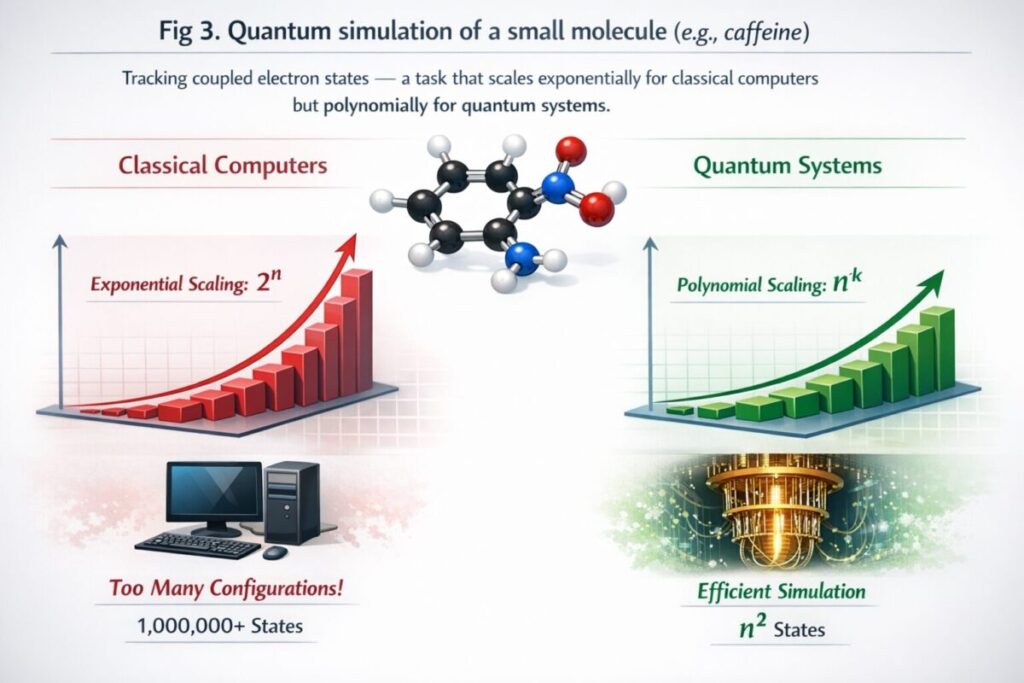

This doesn’t make quantum machines universally faster. They excel at specific problem types: simulating molecules, breaking cryptographic codes, optimising large systems, and training certain machine learning models, tasks where classical hardware hits fundamental limits.

Classical computers encode information as bits that are 0 or 1, implemented as transistors that switch between two voltage states. Modern chips pack tens of billions of transistors into a die roughly the size of a fingernail. But even at that density, certain problem classes explode in complexity.

Simulating a molecule with 50 electrons requires tracking 2⁵⁰ possible quantum states simultaneously, roughly a quadrillion combinations. Storing that many amplitudes already takes petabytes of memory, and every additional electron doubles the requirement, so slightly larger molecules quickly outgrow any classical supercomputer. A sufficiently powerful quantum computer handles the problem natively, because quantum mechanics is its native language.
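To make that scaling concrete, here is a rough back-of-the-envelope sketch in Python. It assumes one complex amplitude per basis state stored in 16 bytes (a common double-precision layout, but an assumption here); the function names are illustrative only.

```python
# Rough memory estimate for storing a full quantum state vector classically.
# Assumption: each basis state needs one complex amplitude of 16 bytes.

BYTES_PER_AMPLITUDE = 16  # assumed: two 64-bit floats per complex number


def state_vector_bytes(n: int) -> int:
    """Bytes needed to hold all 2**n complex amplitudes."""
    return (2 ** n) * BYTES_PER_AMPLITUDE


def human_readable(num_bytes: int) -> str:
    """Format a byte count with binary prefixes (KiB, MiB, ...)."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB", "ZiB", "YiB"]
    value = float(num_bytes)
    for unit in units:
        # Stop at the first unit that keeps the number below 1024,
        # or at the largest unit we know about.
        if value < 1024 or unit == units[-1]:
            return f"{value:.1f} {unit}"
        value /= 1024


if __name__ == "__main__":
    for n in (10, 30, 50, 60):
        size = human_readable(state_vector_bytes(n))
        print(f"{n:>3} two-level particles -> {2 ** n:.3e} amplitudes, {size}")
```

The useful pattern is the doubling: each extra particle doubles the memory, so moving from 50 to 60 multiplies the requirement by about a thousand.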

The bottleneck isn’t processor speed, it’s the fundamental way classical bits represent state. Quantum computing is not about building a faster computer; it’s about building a different kind of computer for different types of problems.

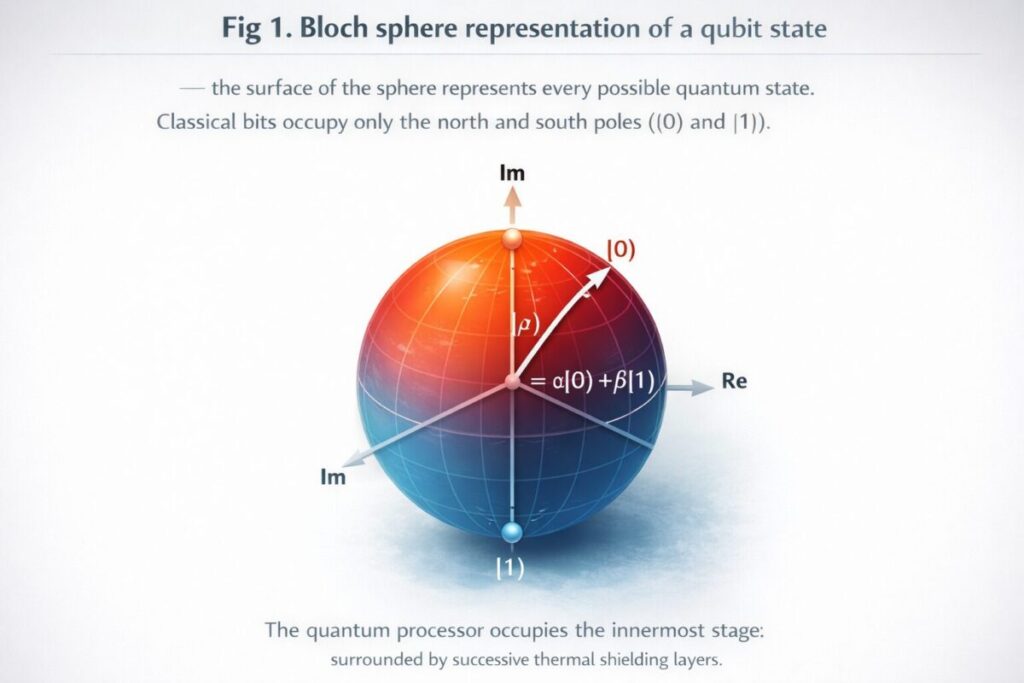

A classical bit is always either 0 or 1. A qubit is described by amplitudes over both states until it is measured; this is superposition.

Classical bit (0 or 1): deterministic, always one state.

Qubit (α|0⟩ + β|1⟩): probabilistic superposition until measured.

A qubit can be implemented using several physical systems. The most advanced quantum processors today use one of three dominant approaches:

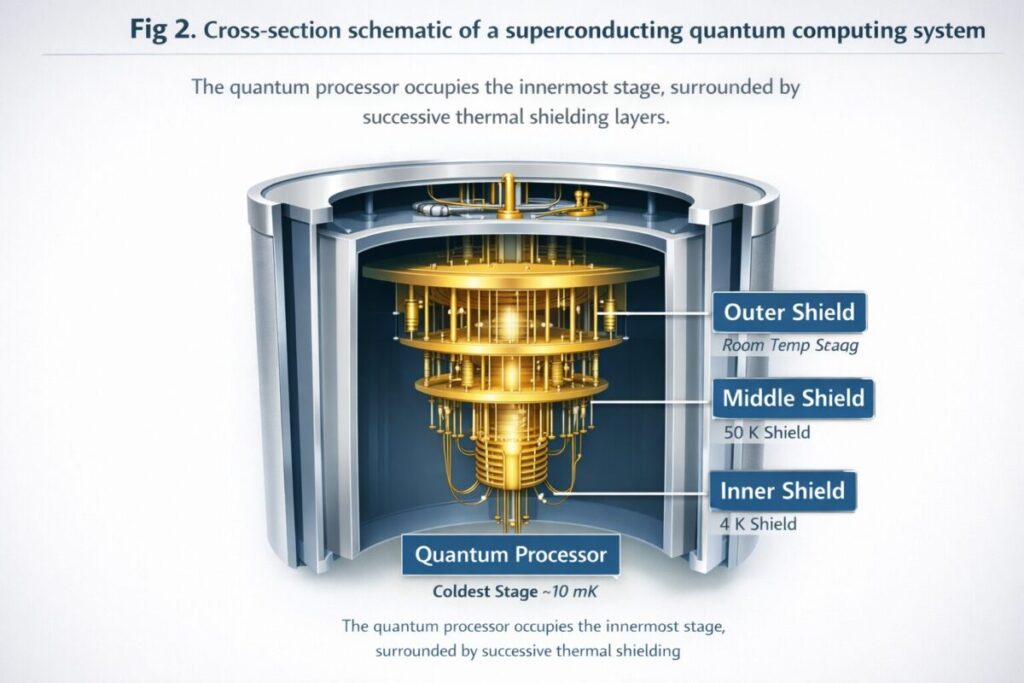

Used by Google and IBM, superconducting qubits are very small circuits cooled to near absolute zero (≈15 millikelvin, colder than deep space). At this temperature, electrical resistance disappears, and quantum effects dominate. These qubits are fast, with gate operations completing in nanoseconds, but they decohere quickly, which limits the number of operations that can run before errors accumulate.

IBM’s quantum processors, accessible through the IBM Quantum Experience (IBM Q Experience), use superconducting qubits. IBM’s Qiskit framework lets anyone write quantum programs in Python and run them on real hardware.

IonQ and Quantinuum use individual ions (electrically charged atoms) suspended in electromagnetic fields. Trapped ion qubits have far longer coherence times than superconducting systems and lower error rates per gate, but their gates are slower. The trade-off is fewer, higher-quality qubits versus many faster, noisier ones. For precision-demanding tasks such as quantum chemistry, trapped-ion systems often outperform larger superconducting counterparts.

PsiQuantum and Xanadu encode qubits in photons (particles of light). Photons don’t require cryogenic cooling and are naturally suited for quantum communication and cryptography. The engineering challenge is creating reliable single-photon sources and detectors at scale.

| Technology | Key Players | Coherence Time | Gate Speed | Best For |
|---|---|---|---|---|
| Superconducting qubits | IBM, Google | Microseconds | ~10–50 ns | Speed, scale-up |
| Trapped ion | IonQ, Quantinuum | Minutes–hours | ~1–10 µs | Accuracy, chemistry |
| Photonic | PsiQuantum, Xanadu | No decoherence in transit | Light speed | Communication, QKD |
| Topological (experimental) | Microsoft | Theoretically very long | TBD | Fault tolerance |

When a qubit is in superposition, it simultaneously explores multiple computational paths. With n qubits in superposition, a quantum computer can represent 2ⁿ states at once. A 300-qubit register can represent more states than there are atoms in the observable universe, though reading out that information requires careful algorithm design.
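The 300-qubit comparison is easy to check with exact integer arithmetic. The sketch below assumes the commonly quoted order-of-magnitude estimate of 10^80 atoms in the observable universe; that figure and the helper names are assumptions, not something taken from this post.

```python
# Compare the number of basis states of an n-qubit register with an
# assumed estimate of the number of atoms in the observable universe.

ATOMS_IN_OBSERVABLE_UNIVERSE = 10 ** 80  # assumed order-of-magnitude estimate


def basis_states(n_qubits: int) -> int:
    """Number of basis states spanned by n qubits (2 to the power n)."""
    return 2 ** n_qubits


def min_qubits_to_exceed(target: int) -> int:
    """Smallest n for which 2**n is strictly greater than target."""
    n = 0
    while 2 ** n <= target:
        n += 1
    return n


if __name__ == "__main__":
    print(basis_states(300) > ATOMS_IN_OBSERVABLE_UNIVERSE)
    print(min_qubits_to_exceed(ATOMS_IN_OBSERVABLE_UNIVERSE))
```

Under that assumption the crossover arrives well before 300 qubits, which is why the comparison holds with room to spare.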

“Superposition doesn’t mean a qubit is ‘both 0 and 1.’ It means the qubit’s state is described by probability amplitudes, one complex number for each possible outcome. Measurement collapses this to a definite value.”
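A small classical simulation can illustrate how amplitudes turn into measurement outcomes. This is only a sketch: it assumes a single qubit in the state α|0⟩ + β|1⟩, where outcome 0 occurs with probability |α|² and outcome 1 with probability |β|² after normalisation. The function names are made up for illustration.

```python
import math
import random
from collections import Counter
from typing import Tuple


def outcome_probabilities(alpha: complex, beta: complex) -> Tuple[float, float]:
    """Return (P(0), P(1)) for alpha|0> + beta|1>, normalising the state first."""
    norm_sq = abs(alpha) ** 2 + abs(beta) ** 2
    if norm_sq == 0:
        raise ValueError("at least one amplitude must be non-zero")
    return abs(alpha) ** 2 / norm_sq, abs(beta) ** 2 / norm_sq


def measure(alpha: complex, beta: complex, shots: int, seed: int = 0) -> Counter:
    """Simulate repeated measurements; each shot yields a definite 0 or 1."""
    p0, _ = outcome_probabilities(alpha, beta)
    rng = random.Random(seed)  # seeded so repeated runs give the same counts
    return Counter(0 if rng.random() < p0 else 1 for _ in range(shots))


if __name__ == "__main__":
    # Equal superposition with a complex phase on |1>; the phase does not
    # change the measurement probabilities in this basis.
    alpha, beta = 1 / math.sqrt(2), 1j / math.sqrt(2)
    print(outcome_probabilities(alpha, beta))
    print(measure(alpha, beta, shots=1000))
```

Each individual shot returns a plain 0 or 1; the amplitudes only show up in the statistics over many shots.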

Two entangled qubits share a quantum state such that measuring one instantly determines the state of the other, regardless of distance. Entanglement is the mechanism by which quantum computers coordinate computation across many qubits simultaneously. It’s not magic, it’s a correlation established at the point of entanglement and used algorithmically to amplify correct answers.
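The correlation can be seen in a tiny state-vector simulation. The sketch below is plain Python rather than any quantum SDK, and it assumes the standard construction of a Bell state: a Hadamard gate on the first qubit followed by a CNOT. Amplitudes are stored in the order 00, 01, 10, 11; all names are illustrative.

```python
import math
import random
from typing import List

LABELS = ["00", "01", "10", "11"]  # basis-state order used throughout


def hadamard_on_first(state: List[complex]) -> List[complex]:
    """Apply a Hadamard gate to the first qubit of a two-qubit state."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]


def cnot_first_controls_second(state: List[complex]) -> List[complex]:
    """Flip the second qubit whenever the first qubit is 1."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def bell_state() -> List[complex]:
    """Start in |00>, then apply H on the first qubit and a CNOT."""
    return cnot_first_controls_second(hadamard_on_first([1, 0, 0, 0]))


def sample_outcomes(state: List[complex], shots: int, seed: int = 0) -> List[str]:
    """Sample measurement results with probability |amplitude|**2."""
    weights = [abs(a) ** 2 for a in state]
    return random.Random(seed).choices(LABELS, weights=weights, k=shots)


if __name__ == "__main__":
    print(bell_state())
    print(sorted(set(sample_outcomes(bell_state(), shots=200))))
```

Every sampled result is either 00 or 11: neither qubit's value is fixed in advance, yet the two always agree.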

Think of a quantum computer as a highly tuned instrument that plays all possible solution paths simultaneously, then amplifies the harmonics that correspond to correct answers and cancels the noise from wrong ones. Measurement captures the dominant harmonic.
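One concrete instance of this amplify-and-cancel idea is Grover's search. The following is a classical simulation of its amplitudes, offered as a sketch rather than a reference implementation: it assumes a single marked item, an oracle that flips the sign of that item's amplitude, and a diffusion step that reflects every amplitude about the mean.

```python
import math
from typing import List


def grover_iteration(amplitudes: List[float], marked: int) -> List[float]:
    """One round of amplitude amplification on a list of real amplitudes."""
    # Oracle: flip the sign of the marked item's amplitude.
    flipped = [-a if i == marked else a for i, a in enumerate(amplitudes)]
    # Diffusion: reflect every amplitude about the mean. Wrong answers
    # shrink (destructive interference), the marked one grows.
    mean = sum(flipped) / len(flipped)
    return [2 * mean - a for a in flipped]


def search_probabilities(n_items: int, marked: int, iterations: int) -> List[float]:
    """Measurement probabilities after the given number of iterations."""
    amplitudes = [1 / math.sqrt(n_items)] * n_items  # uniform superposition
    for _ in range(iterations):
        amplitudes = grover_iteration(amplitudes, marked)
    return [a * a for a in amplitudes]


if __name__ == "__main__":
    print(search_probabilities(n_items=4, marked=2, iterations=1))
    print(search_probabilities(n_items=8, marked=5, iterations=2))
```

With four items, a single iteration moves all of the probability onto the marked entry. With eight items and two iterations, most but not all of it ends up there, which is why the number of iterations has to be chosen with care.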

Superconducting quantum processors are suspended inside layered dilution refrigerators that maintain temperatures of ~15 millikelvin, essential for eliminating thermal noise that would destroy qubit coherence.

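A real quantum computer is not a box on a desk. A superconducting quantum computing system includes the processor itself and the layered dilution refrigerator that keeps it at millikelvin temperatures.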

| Figure | What it refers to |
|---|---|
| 1,121 | Qubits in IBM Condor, IBM’s 2023 processor |
| 53 | Qubits used in Google Sycamore’s quantum supremacy demo |
| 15 mK | Operating temperature of superconducting processors |
| <0.5% | Two-qubit gate error rate (best trapped ion systems) |

IBM offers the most accessible ecosystem. The IBM Q Experience (now IBM Quantum Platform) gives free cloud access to real processors. IBM Qiskit is the world’s most-used quantum SDK with 550,000+ registered users.

Google’s quantum computer Sycamore achieved “quantum supremacy” in 2019 by completing in 200 seconds a task estimated to take a classical supercomputer 10,000 years. Their Willow chip (2024) reached new error-correction milestones.

The D-Wave quantum computer uses a different paradigm, quantum annealing, optimised for combinatorial optimisation. It has been used by Volkswagen for traffic optimisation and by Lockheed Martin for aerospace verification.

Microsoft is pursuing topological qubits using Majorana fermions, a fundamentally different architecture that theoretically enables fault-tolerant computation with fewer physical qubits. Azure Quantum offers cloud access to multiple hardware providers.

IonQ is a publicly traded quantum computing company. Its trapped ion systems have the highest “algorithmic qubit” counts per reported gate fidelity. It partners with Amazon Braket, Azure Quantum, and Google Cloud.

QCi focuses on quantum optimisation applications using photonic and entropy quantum computing systems. It targets government, financial, and logistics clients with near-term, hardware-agnostic solutions.

QPiAI is an emerging Indian quantum computing startup focused on building quantum programming tools and simulation platforms for the South Asian research ecosystem. Though not yet at IBM or Google’s hardware scale, it reflects the growing global distribution of quantum research and quantum coding infrastructure.

Unlike classical programs that manipulate bits with logic gates, quantum programs are built from sequences of quantum gates applied to qubits. Widely used frameworks include IBM’s Qiskit and Xanadu’s PennyLane.

The fastest path to writing and running real quantum code: open quantum.ibm.com, create a free account, open IBM Quantum Lab (a Jupyter environment), and run a basic Bell state circuit in Qiskit in under 10 minutes, on actual quantum hardware, not just a simulator.

Quantum computers can simulate molecular interactions at the quantum level, enabling precise modelling of protein folding and drug-binding mechanisms that classical methods approximate poorly.

Quantum simulation is the application closest to delivering near-term advantage. Companies like Biogen and Roche are partnering with quantum firms to model protein-ligand interactions. Classical drug simulation relies on approximations; quantum simulation models electron correlation exactly. Even a 50-logical-qubit fault-tolerant system could revolutionise the design of nitrogen fixation catalysts, potentially transforming agriculture and reducing industrial CO₂ emissions by hundreds of megatons annually.

Shor’s algorithm running on a fault-tolerant quantum computer would break RSA-2048 encryption in hours rather than the billions of years it would take classically. NIST finalised its first post-quantum cryptography standards in 2024: ML-KEM, ML-DSA, and SLH-DSA (derived from CRYSTALS-Kyber, CRYSTALS-Dilithium, and SPHINCS+), specifically designed to resist quantum attacks. Every organisation handling long-lived sensitive data should be assessing migration timelines now.
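To see why period finding threatens RSA, it helps to look at the classical half of Shor's algorithm on a toy number. In the sketch below the period is found by brute force, which is exactly the step a quantum computer would replace; everything else is ordinary arithmetic. The function names and the example values (15 and 7) are chosen for illustration.

```python
from math import gcd
from typing import Optional, Tuple


def find_period(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    This loop is the expensive part classically; Shor's algorithm
    replaces it with quantum period finding.
    """
    if gcd(a, n) != 1:
        raise ValueError("a must share no factor with n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factors_from_period(a: int, n: int) -> Optional[Tuple[int, int]]:
    """Turn the period of a modulo n into two factors of n, if this a works."""
    r = find_period(a, n)
    if r % 2 == 1:
        return None  # odd period: try a different a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root: try a different a
    p, q = gcd(half - 1, n), gcd(half + 1, n)
    return (min(p, q), max(p, q))


if __name__ == "__main__":
    print(find_period(7, 15))
    print(factors_from_period(7, 15))
```

For a 2048-bit modulus the brute-force loop is hopeless, which is the whole point: the security of RSA rests on that one step being infeasible.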

JPMorgan Chase and Goldman Sachs are among the financial institutions exploring quantum optimisation for portfolio risk modelling and derivatives pricing. The D-Wave quantum computer is already used by some trading firms for combinatorial optimisation tasks that require evaluating millions of simultaneous scenarios, though hybrid classical-quantum approaches dominate current production workflows.

Quantum machine learning (QML) sits at the intersection of quantum computing and AI. Quantum circuits can act as trainable kernel functions that classical machine learning cannot efficiently compute. PennyLane’s differentiable quantum circuits enable variational quantum eigensolvers (VQE) and quantum neural networks. Current results are promising for specific feature-map construction, but general QML advantage over classical deep learning remains an open research question.

Volkswagen ran a pilot with D-Wave to route 418 taxis in Beijing, minimising total travel time across a combinatorial search space that grows factorially with fleet size. The quantum annealer found high-quality solutions 10x faster than the classical solver for the same hardware budget. Similar approaches are being explored in airline scheduling and last-mile delivery routing.
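Problems like this are typically handed to an annealer as a QUBO (quadratic unconstrained binary optimisation): a set of 0/1 variables with a cost for each variable and each pair. The toy below is not Volkswagen's formulation; it is a hypothetical three-taxi example in which each taxi either stays on the main route (0) or takes a detour (1), detours have a cost, and every pair of taxis sharing the main route adds a congestion penalty. A brute-force search stands in for the annealer.

```python
from itertools import product
from typing import Dict, List, Sequence, Tuple


def taxi_qubo(detour_costs: List[float], congestion_penalty: float):
    """Build QUBO coefficients for the hypothetical taxi example.

    Energy = sum(d_i * x_i) + penalty * sum over pairs of (1 - x_i)(1 - x_j),
    expanded into linear, quadratic, and constant terms.
    """
    n = len(detour_costs)
    linear = [d - congestion_penalty * (n - 1) for d in detour_costs]
    quadratic = {(i, j): congestion_penalty
                 for i in range(n) for j in range(i + 1, n)}
    offset = congestion_penalty * n * (n - 1) / 2
    return linear, quadratic, offset


def qubo_energy(x: Sequence[int], linear: List[float],
                quadratic: Dict[Tuple[int, int], float], offset: float = 0.0) -> float:
    """Evaluate the QUBO cost of one 0/1 assignment."""
    energy = offset + sum(c * xi for c, xi in zip(linear, x))
    energy += sum(w * x[i] * x[j] for (i, j), w in quadratic.items())
    return energy


def brute_force_minimum(linear, quadratic, offset=0.0) -> Tuple[int, ...]:
    """Try all 2**n assignments; only feasible for very small n."""
    return min(product((0, 1), repeat=len(linear)),
               key=lambda x: qubo_energy(x, linear, quadratic, offset))


if __name__ == "__main__":
    linear, quadratic, offset = taxi_qubo([1, 2, 4], congestion_penalty=3)
    best = brute_force_minimum(linear, quadratic, offset)
    print(best, qubo_energy(best, linear, quadratic, offset))
```

Brute force checks every assignment, so its cost doubles with each added taxi. That growth is the reason heuristic solvers, quantum or classical, are used for realistic fleet sizes.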

Accurate quantum simulation of battery chemistry could accelerate the design of next-generation lithium-sulfur and solid-state batteries. Quantum computation and quantum information techniques also hold promise for modelling high-temperature superconductors, materials that could eliminate resistive energy losses in power grids worldwide.

We are in the NISQ (Noisy Intermediate-Scale Quantum) era: machines with 50 to 1,000+ qubits but significant error rates. Fault-tolerant quantum computing (FTQC), which uses error-corrected logical qubits for reliable large-scale computation, is estimated to require millions of physical qubits and is 10–20 years away by most expert projections.
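A classical analogy gives some intuition for why redundancy helps and why it costs so many physical qubits. The sketch below uses a simple repetition code with majority voting, which is an assumption made for illustration; real quantum error-correcting codes are considerably more involved. If each copy fails independently with probability p, the encoded value is wrong only when more than half of the copies fail.

```python
from math import comb


def logical_error_rate(p: float, n_copies: int = 3) -> float:
    """Probability that a majority of n_copies independent copies are wrong."""
    if n_copies % 2 == 0:
        raise ValueError("use an odd number of copies so a majority exists")
    majority = n_copies // 2 + 1
    return sum(
        comb(n_copies, k) * p ** k * (1 - p) ** (n_copies - k)
        for k in range(majority, n_copies + 1)
    )


if __name__ == "__main__":
    for p in (0.001, 0.01, 0.1, 0.6):
        rates = [logical_error_rate(p, n) for n in (3, 5, 7)]
        print(p, [f"{r:.2e}" for r in rates])
```

When p is small, adding copies drives the logical error rate down quickly. When p is above one half, adding copies makes things worse, a toy version of the threshold behaviour that makes low physical error rates so important.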

Classical computers process binary bits (0 or 1) using deterministic logic gates. Quantum computers process qubits that can exist in superposition (a weighted combination of 0 and 1), use entanglement to correlate qubit states, and employ quantum interference to amplify correct computational paths. This gives quantum systems large, sometimes exponential, speedups for specific problem types, not general speed.

A qubit is the quantum equivalent of a classical bit, but instead of being fixed at 0 or 1, it holds a probability amplitude across both values simultaneously, described mathematically as α|0⟩ + β|1⟩. Only when measured does it “collapse” to a definite 0 or 1, with probabilities determined by |α|² and |β|².

Yes. Google’s Sycamore and Willow processors are real superconducting quantum processors operating at millikelvin temperatures in Google’s Santa Barbara lab. Sycamore made global headlines in 2019 by claiming “quantum supremacy.” Google Willow (2024) improved on error correction in a way that was considered a significant breakthrough.

IBM Quantum Experience (IBM Q Experience) at quantum.ibm.com offers free access to real quantum processors and simulators. The platform uses IBM Qiskit, a Python SDK. Microsoft’s Azure Quantum and Amazon Braket also offer free tiers and simulators for learning quantum coding without upfront cost.

Quantum machine learning uses quantum circuits as computational kernels within classical ML pipelines. Variational quantum circuits (VQCs) can represent feature maps that are classically hard to simulate, potentially improving model expressiveness for specific data types. PennyLane (Xanadu) and IBM Qiskit’s Machine Learning module are leading platforms for QML research.
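To show what a quantum kernel computes, here is a deliberately tiny example simulated in plain Python. It assumes a one-qubit angle encoding, where a number x is mapped to the state cos(x/2)|0⟩ + sin(x/2)|1⟩, and defines the kernel as the squared overlap between two such states. This particular feature map is trivial to compute classically; the feature maps that are hoped to be classically hard use many entangled qubits. All names are illustrative.

```python
import math
from typing import List, Tuple


def feature_state(x: float) -> Tuple[float, float]:
    """Encode x as the single-qubit state cos(x/2)|0> + sin(x/2)|1>."""
    return math.cos(x / 2), math.sin(x / 2)


def quantum_kernel(x: float, y: float) -> float:
    """Squared overlap between the encoded states of x and y."""
    ax, bx = feature_state(x)
    ay, by = feature_state(y)
    overlap = ax * ay + bx * by  # amplitudes are real for this encoding
    return overlap ** 2


def kernel_matrix(xs: List[float]) -> List[List[float]]:
    """Pairwise kernel values, the object a classical SVM would consume."""
    return [[quantum_kernel(a, b) for b in xs] for a in xs]


if __name__ == "__main__":
    for row in kernel_matrix([0.0, 0.5, math.pi]):
        print([round(v, 3) for v in row])
```

The resulting matrix can be passed to any classical kernel method, which is the sense in which the quantum circuit sits inside a classical ML pipeline.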

D-Wave uses quantum annealing, a specialised form of quantum computation optimised for combinatorial optimisation problems. Real-world use cases include traffic flow routing (Volkswagen), satellite image classification (NASA/Google), and supply chain scheduling. It is not a gate-model quantum computer and cannot run algorithms like Shor’s or Grover’s.